65 - Machine Translation

Translation by machines, with the aim of transforming text into another language without loss of meaning.

Machine translation has for decades been one of the most important NLP tasks, because everything starts with understanding each other without barriers. Google Translate is the absolute leader, although it still has shortcomings, and Facebook is in the race with its multilingual machine translation model M2M-100.

However, custom-built models are within reach thanks to Neural Machine Translation implementations that provide sequence-to-sequence models, and to parallel corpora such as ParaCrawl and OPUS.
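A parallel corpus is, at its simplest, a set of sentence pairs in two languages. The sketch below shows one way to pair them up, assuming the corpus has already been downloaded as two line-aligned plain-text files (line *n* of one file translates line *n* of the other). The function name `read_parallel`, the file names and the `max_ratio` length filter are illustrative assumptions, not part of any particular toolkit.

```python
from itertools import zip_longest
from pathlib import Path
import tempfile


def read_parallel(src_path, tgt_path, max_ratio=3.0):
    """Yield (source, target) pairs from two line-aligned text files.

    Pairs with an empty side, or whose token counts differ by more than
    max_ratio, are skipped as likely misalignments (a common heuristic).
    """
    with open(src_path, encoding="utf-8") as src, open(tgt_path, encoding="utf-8") as tgt:
        for s, t in zip_longest(src, tgt):
            if s is None or t is None:
                raise ValueError("files are not line-aligned")
            s, t = s.strip(), t.strip()
            if not s or not t:
                continue  # skip pairs with an empty side
            n_src, n_tgt = len(s.split()), len(t.split())
            if max(n_src, n_tgt) / min(n_src, n_tgt) > max_ratio:
                continue  # length mismatch suggests a bad pair
            yield s, t


# Tiny invented English-Dutch corpus, written to temporary files for the demo.
with tempfile.TemporaryDirectory() as tmp:
    en = Path(tmp) / "corpus.en"
    nl = Path(tmp) / "corpus.nl"
    en.write_text("Good morning .\nHello\n\n", encoding="utf-8")
    nl.write_text("Goedemorgen .\nHallo , hoe gaat het met je vandaag ?\n\n", encoding="utf-8")
    pairs = list(read_parallel(en, nl))

print(pairs)
```

Pairs that survive this kind of filtering are what a sequence-to-sequence model would typically be trained on; real pipelines usually add further cleaning such as deduplication and language identification.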

As the quality of Machine Translation models grows, there is also an opportunity to translate training datasets into other languages. English is almost always the language of NLP blogs, model demos and SOTA leaderboards. Translating these superior English resources can make them useful for other languages.
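Translating a labelled dataset can be as simple as passing the text field through a translation function while leaving the labels untouched. The following is a minimal sketch under that assumption; `translate_dataset` and `toy_translate` are hypothetical names, and the toy lexicon merely stands in for a real translation model.

```python
def translate_dataset(examples, translate, text_key="text"):
    """Return copies of the examples with the text field translated.

    `translate` is any callable mapping a source string to a target string;
    all other fields (such as labels) are carried over unchanged.
    """
    translated = []
    for example in examples:
        new_example = dict(example)  # copy, so the original stays intact
        new_example[text_key] = translate(example[text_key])
        translated.append(new_example)
    return translated


# Stand-in translator for demonstration only; a real MT model would go here.
toy_lexicon = {"great movie": "geweldige film", "boring plot": "saai verhaal"}


def toy_translate(text):
    return toy_lexicon.get(text, text)


english = [
    {"text": "great movie", "label": "positive"},
    {"text": "boring plot", "label": "negative"},
]
dutch = translate_dataset(english, toy_translate)
print(dutch)
```

This works most directly for sentence-level labels such as sentiment. Token-level annotations, for example entity spans, would additionally need to be realigned after translation.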

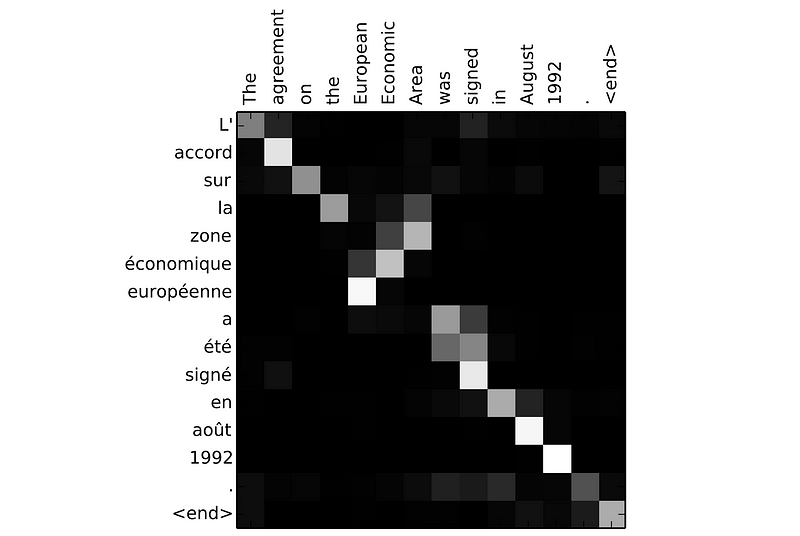

The figure above shows a word alignment matrix from a Neural Machine Translation task. Each pixel shows the weight of the annotation, indicating which positions in the source sentence were considered more important when generating the target word.
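To make the alignment matrix concrete, here is a small sketch of how such weights could be produced, assuming an attention-based encoder-decoder in which each target word's weights are a softmax over alignment scores for the source positions. The scores, sentences and function names are invented for illustration and do not come from the model behind the figure.

```python
import math


def attention_weights(scores):
    """Row-wise softmax: turn raw alignment scores into weights that sum to 1."""
    weights = []
    for row in scores:
        peak = max(row)  # subtract the max for numerical stability
        exps = [math.exp(x - peak) for x in row]
        total = sum(exps)
        weights.append([e / total for e in exps])
    return weights


def most_attended(weights, source_tokens):
    """For each target word, return the source token with the largest weight."""
    return [
        source_tokens[max(range(len(row)), key=row.__getitem__)]
        for row in weights
    ]


source = ["the", "black", "cat"]
target = ["le", "chat", "noir"]

# One row per target word, one column per source word (made-up scores).
scores = [
    [4.0, 0.5, 0.2],
    [0.3, 0.4, 3.5],
    [0.1, 3.8, 0.9],
]

weights = attention_weights(scores)
aligned = most_attended(weights, source)
for tgt_word, src_word in zip(target, aligned):
    print(tgt_word, "<-", src_word)
```

Each row of `weights` corresponds to one row of pixels in an alignment plot: brighter pixels are larger weights. In this toy case the off-diagonal maxima reflect the adjective and noun swapping order between English and French.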

This article is part of the project Periodic Table of NLP Tasks, a project to systematize NLP tasks.